Abstract: In this paper, we present an approach to tactile pose estimation from the first touch for known objects. First, we create an object-agnostic map from real tactile observations to contact shapes. Next, for a new object with known geometry, we learn a tailored perception model completely in simulation. To do so, we simulate the contact shapes that a dense set of object poses would produce on the sensor. Then, given a new contact shape obtained from the sensor output, we match it against the pre-computed set using an object-specific embedding learned purely in simulation with contrastive learning.

This results in a perception model that can localize objects from a single tactile observation. It also allows reasoning over pose distributions and incorporating additional pose constraints from other perception systems or from multiple contacts. We provide quantitative results for four objects. Our approach provides high-accuracy pose estimates from distinctive tactile observations, while regressing pose distributions to account for contact shapes that could result from different object poses. We further extend and test our approach in multi-contact scenarios where several tactile sensors are simultaneously in contact with the object.
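Because each contact yields a distribution over the same set of candidate object poses, constraints from several sensors (or from another perception system) can be combined on that shared set. The sketch below is a minimal illustration, not the paper's implementation: it assumes every distribution is defined over the same N candidate poses expressed in a common object frame, and that the observations are conditionally independent given the pose, so the fused posterior is proportional to their elementwise product. The function name `fuse_pose_distributions` is hypothetical.

```python
import numpy as np


def fuse_pose_distributions(distributions, eps=1e-12):
    """Hypothetical sketch: fuse per-sensor distributions over shared candidate poses.

    distributions: (S, N) array; row s is a probability vector over the same
    N candidate object poses. Assumes conditional independence given the pose,
    so the posterior is proportional to the elementwise product of the rows.
    """
    d = np.asarray(distributions, dtype=float)
    # Sum log-probabilities instead of multiplying to avoid underflow.
    log_post = np.sum(np.log(d + eps), axis=0)
    log_post -= log_post.max()
    post = np.exp(log_post)
    return post / post.sum()


# Sensor A cannot tell poses 0 and 1 apart; sensor B rules out pose 0.
sensor_a = np.array([0.5, 0.5, 0.0, 0.0])
sensor_b = np.array([0.0, 0.6, 0.4, 0.0])
posterior = fuse_pose_distributions([sensor_a, sensor_b])
```

A binary mask from another perception system (1 for poses it considers feasible, 0 otherwise) can be passed as one more row, which simply zeroes out the excluded poses.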

Link to paper.

Contact: Maria Bauza (bauza@mit.edu)

Related Publications

Summary video

Approach summary

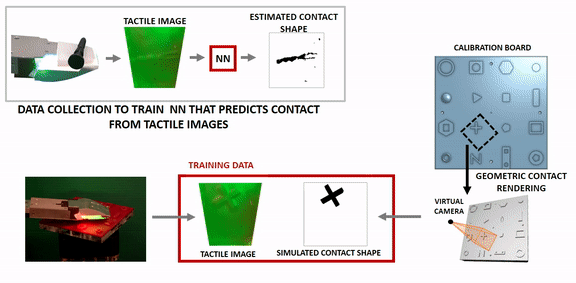

We estimate the contact shape from a tactile image and match it against a dense set of simulated contacts to estimate the object pose.

Estimate contact shape from tactile images

We provide basic code that, given a tactile image, computes the resulting local contact shape as a heightmap, together with the 3D models of the objects used during training (Code). We will soon release tactile images and their corresponding simulated contact shapes.
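On the simulation side, each object pose in the dense set must be turned into a contact heightmap comparable to the one estimated from a real tactile image. The sketch below is a simplified, hypothetical version of that step, not the released code: it assumes the object is given as a point cloud sampled from its 3D model, the sensor surface is the plane z = 0 in the sensor frame, and the object is pressed down until its lowest point penetrates a fixed depth. The function name and the `grid`, `pixel_size` and `penetration` parameters are illustrative assumptions.

```python
import numpy as np


def simulate_contact_heightmap(points, R, t, grid=(32, 32), pixel_size=0.5,
                               penetration=1.0):
    """Hypothetical sketch: rasterize the indentation an object leaves on a flat sensor.

    points: (N, 3) points sampled on the object surface (object frame, mm).
    R, t: rotation (3x3) and translation (3,) mapping the object frame to the
          sensor frame; the sensor surface is z = 0 and the object lies above it.
    Assumes the object is pushed down until its lowest point penetrates
    `penetration` mm; points closer than that to the lowest point are in contact.
    """
    p = points @ R.T + t
    # Indentation depth of each point relative to the lowest point of the object.
    depth = penetration - (p[:, 2] - p[:, 2].min())
    in_contact = depth > 0
    h, w = grid
    # Pixel indices, with the image centre at the sensor origin.
    cols = np.floor(p[:, 0] / pixel_size + w / 2).astype(int)
    rows = np.floor(p[:, 1] / pixel_size + h / 2).astype(int)
    valid = in_contact & (rows >= 0) & (rows < h) & (cols >= 0) & (cols < w)
    heightmap = np.zeros(grid)
    # Keep the deepest indentation that falls into each pixel.
    np.maximum.at(heightmap, (rows[valid], cols[valid]), depth[valid])
    return heightmap


# Two nearby points touch the sensor; the third is too high to make contact.
pts = np.array([[0.0, 0.0, 0.0], [0.2, 0.2, 0.5], [1.0, 1.0, 3.0]])
hm = simulate_contact_heightmap(pts, np.eye(3), np.zeros(3))
```

Calling this function once per pose in a dense sample of object poses yields the pre-computed set of simulated contact shapes that real contacts are matched against.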

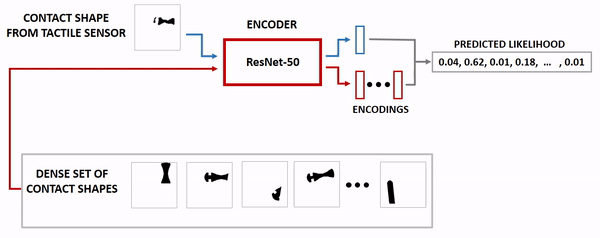

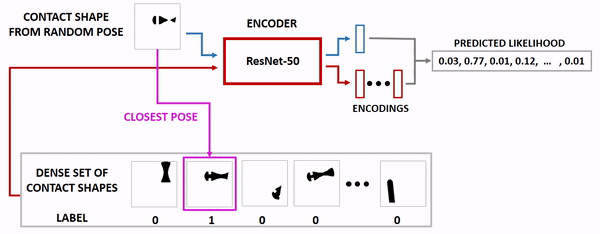

Training a similarity function between contacts using contrastive learning

We use randomly sampled contact poses to train a similarity function between contact shapes.
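A common way to train such a similarity function is an InfoNCE-style contrastive loss over embeddings of contact shapes. The NumPy sketch below only evaluates the loss and is not necessarily the paper's exact objective: it assumes that row i of `z_a` and row i of `z_b` embed two views of the same simulated contact (the positive pair), while every other row in the batch serves as a negative. In practice the loss would be computed inside an autodiff framework so the embedding network can be trained; the function name and the `temperature` value are assumptions.

```python
import numpy as np


def info_nce_loss(z_a, z_b, temperature=0.1):
    """InfoNCE loss for a batch of paired embeddings (hypothetical sketch).

    Row i of z_a and row i of z_b form a positive pair; all other rows of z_b
    are negatives for row i of z_a.
    """
    a = z_a / np.linalg.norm(z_a, axis=1, keepdims=True)
    b = z_b / np.linalg.norm(z_b, axis=1, keepdims=True)
    logits = a @ b.T / temperature                 # (B, B) scaled cosine similarities
    logits -= logits.max(axis=1, keepdims=True)    # numerical stability
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    # Positive pairs sit on the diagonal; average their negative log-likelihood.
    return -np.mean(np.diag(log_probs))


z = np.eye(4)
loss_matched = info_nce_loss(z, z)                    # positives aligned
loss_shuffled = info_nce_loss(z, z[[1, 2, 3, 0]])     # positives mismatched
```

The loss is low when each contact's embedding is closest to its own positive and high when it is closer to other contacts, which is what makes the learned embedding usable as a similarity function at test time.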

Test time: given a contact from the real sensor, we predict a distribution over contact poses

We match the estimated contact shape from the tactile sensor against a simulated dense set of contacts to predict a distribution over contact poses.
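The matching step can be sketched as a softmax over similarities between the embedding of the real contact and the embeddings of the pre-computed simulated contacts. This is a minimal illustration under stated assumptions rather than the authors' code: it assumes cosine similarity in the learned embedding space and a hypothetical `temperature` that controls how sharply probability concentrates on the best matches. Distinctive contacts then produce a peaked distribution, while ambiguous contacts spread probability over every pose that would produce a similar contact shape. The names `pose_distribution` and `top_k_poses` are hypothetical.

```python
import numpy as np


def pose_distribution(query, bank, temperature=0.05):
    """Hypothetical sketch: distribution over candidate poses from embedding matches.

    query: (D,) embedding of the contact estimated from the real sensor.
    bank: (N, D) embeddings of the simulated contacts, one per candidate pose.
    """
    q = query / np.linalg.norm(query)
    b = bank / np.linalg.norm(bank, axis=1, keepdims=True)
    logits = (b @ q) / temperature    # cosine similarity per candidate pose
    logits -= logits.max()            # numerical stability
    p = np.exp(logits)
    return p / p.sum()


def top_k_poses(probs, k=5):
    """Indices and probabilities of the k most likely candidate poses."""
    idx = np.argsort(probs)[::-1][:k]
    return idx, probs[idx]


probs = pose_distribution(np.array([1.0, 0.9, 0.0]), np.eye(3))
best_idx, best_probs = top_k_poses(probs, k=2)
```

Each returned index refers to a pose in the dense simulated set, so the distribution can be passed directly to further reasoning, such as combining it with constraints from other sensors.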